Illustration by iStock; Security Management

Organizations around the world are in various stages of their artificial intelligence (AI) deployment journey.

Some—especially those in North America—are taking a more aggressive approach to adopting the technology. A recent survey sponsored by OpenText and conducted by the Ponemon Institute asked 1,878 IT and IT security practitioners in all regions of the world about how their companies are using AI for cybersecurity and business purposes. They also asked practitioners about how they addressing risks created by AI, generative AI, and agentic AI.

The survey defined agentic AI as a “type of AI that can autonomously make decisions, take actions, and learn on its own to achieve specific goals.” The technology is “characterized by autonomy, the ability to initiate and complete tasks without constant oversight, and reasoning, where sophisticated decision-making is based on context.”

Ponemon published the findings in Managing Risks and Optimizing the Value of AI, GenAI, and Agentic AI on 23 March.

Below are some top takeaways from the survey’s findings on agentic AI.

1. Risk Management Concerns Are Delaying Deployment

Just 38 percent of respondents said their organizations have fully adopted (15 percent) or partially adopted (23 percent) agentic AI. Those that are using the technology share they have adopted it to perform sequences of tasks like coding, email response, and data queries. But nearly half of respondents (43 percent) said their organization lacks the proper risk and security controls to manage agentic AI.

When asked why their organization would not adopt agentic AI, respondents cited:

- A lack of proper risk and security controls – 43 percent

- Complex system integration – 38 percent

- Data quality issues – 33 percent

- High costs to implement and maintain – 32 percent

- Unclear business value – 28 percent

- Lack of in-house expertise – 26 percent

2. Agentic AI Isn’t Always Up to the Task

Less than half of those who have deployed agentic AI at their organizations (44 percent) said that it’s highly capable of automating manual tasks for security teams with limited human intervention. Just 39 percent of respondents added that agentic AI is “highly effective in removing human error by systemically retrieving comprehensive threat intelligence.”

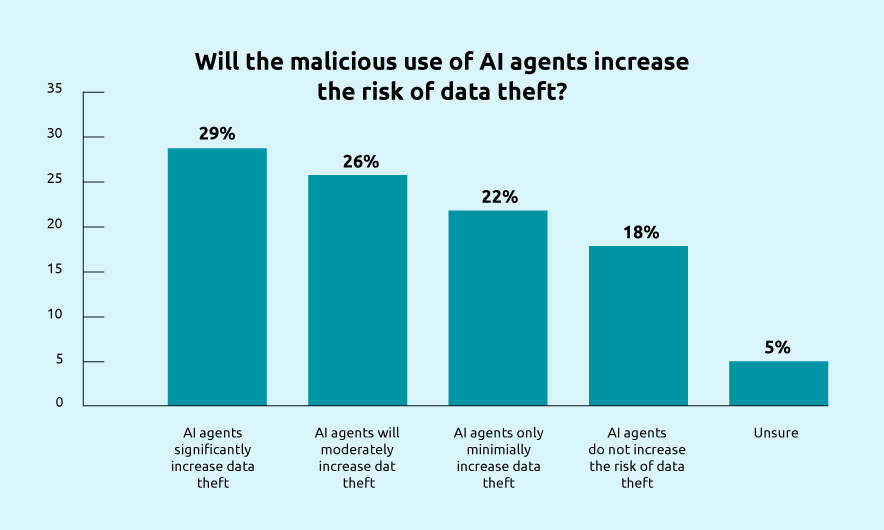

3. The Major Risk of Corporate Data Theft Is Problematic

While agentic AI can help prevent data theft, the malicious use of the technology “significantly increases the risk of data theft,” the survey found. More than half of respondents (55 percent) said they believe AI agents will “significantly increase data theft (29 percent) or moderately increase the risk of data theft (26 percent).”

4. AI Agents Could Be Mucking Up Intrusion Detection

Sixty-six percent of respondents using agentic AI said the agents make intrusion detection difficult, with just 12 percent of respondents saying AI agents do not impede intrusion detection.

“The malicious use of AI agents significantly complicates intrusion detection by enabling faster, stealthier attacks that mimic legitimate behavior, creating convincing deepfakes, automating complex reconnaissance, and poisoning training data,” according to the report.

5. The C-Suite and Staff Are Not Aligned on AI Risks

The survey also assessed that there are significantly differing views between C-level respondents and staff- or technician-level respondents in the same organization. When asked if their organizations have tools or processes in place to respond to the misuse of tools that result in data leakage and prompt injections, 65 percent of C-level respondents said yes compared to just 35 percent of staff.

“Prompt injection is a critical security vulnerability in large language models (LLMs) where malicious, crafted inputs trick the model into ignoring its original developer instructions and executing unauthorized commands,” the survey said. “By exploiting the inability of LLMs to distinguish between instructions and data, attackers can bypass safeguards, leak sensitive data, or manipulate outputs.”

C-level respondents were also more optimistic about the future ability of AI systems to reason and make autonomous decisions to avoid misuse. Forty percent of staff/technicians said this would be possible, compared to 56 percent of C-level positions.

Megan Gates is editor-in-chief of Security Technology. Connect with her at [email protected] or on LinkedIn.