NetApp made three major announcements this week, two global and one focused on Australia and New Zealand, all on the same day: the day of the NetApp INSIGHT Xtra conference in Sydney.

That’s not a coincidence. It’s a coordinated signal: the company that built its reputation on enterprise storage wants you to know it’s now an AI infrastructure company.

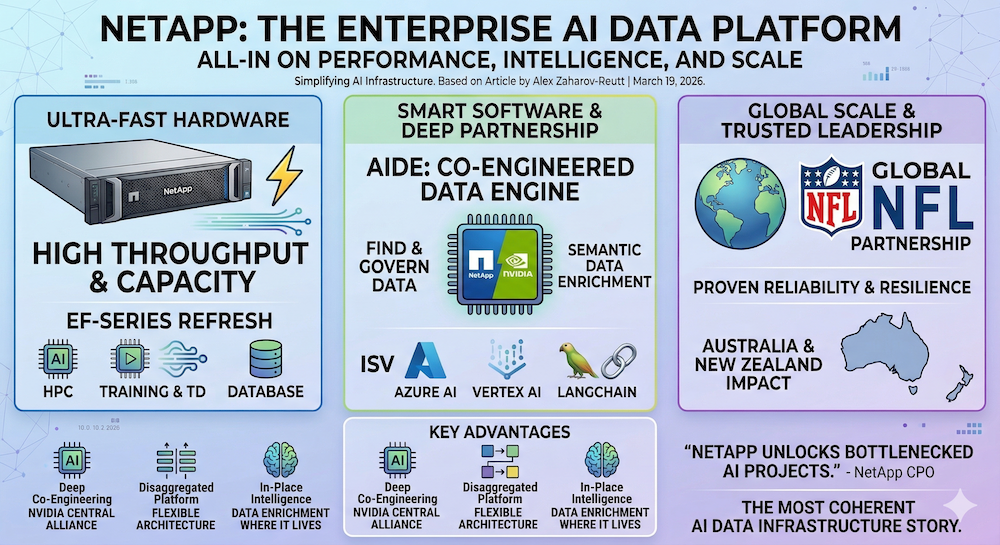

The trio of releases covers new high-performance hardware (the EF50 and EF80), a deepened NVIDIA partnership anchored by a co-engineered AI Data Engine, and a regional strategy for Australia and New Zealand that uses the NFL (yes, American football) as its proof point. Taken individually, each is a solid product story.

Taken together, they sketch out something more ambitious: a company trying to own every stage of the AI data pipeline, from raw storage to semantic enrichment to agentic workflows.

The Hardware: 110GBps, 2U, and a 250% Jump

Let’s start with the metal. The new EF50 and EF80 are NetApp’s refresh of the EF-Series line, purpose-built for workloads that chew through data at scale: AI model training, HPC simulations, large transactional databases. The headline numbers are genuinely impressive: over 110GBps of read throughput and 55GBps of write throughput, crammed into a 2U form factor with 1.5PB of capacity. By NetApp’s figures, that’s a 250% performance improvement over the previous generation.

Power efficiency sits at 63.7GBps per kilowatt. For data centre operators watching their energy bills climb, that number matters more than any throughput benchmark.
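
To put those headline figures in perspective, here is a back-of-the-envelope sketch in Python. It uses only the numbers quoted above, and it rests on assumptions of mine rather than anything NetApp has published: that the 63.7GBps-per-kilowatt figure is measured against read throughput, that units are decimal (1PB = 1,000,000GB), and that "250% improvement" could mean either 3.5x or 2.5x the previous figure. The function names are purely illustrative.

```python
# Figures quoted in the article ("over 110GBps" is treated as exactly 110).
READ_GBPS = 110.0      # read throughput, GB/s
CAPACITY_PB = 1.5      # capacity in a 2U system
GBPS_PER_KW = 63.7     # quoted power efficiency
IMPROVEMENT_PCT = 250  # quoted generational improvement


def implied_power_kw(throughput_gbps: float, gbps_per_kw: float) -> float:
    """Power draw implied by an efficiency figure (ASSUMES it refers to reads)."""
    return throughput_gbps / gbps_per_kw


def full_read_hours(capacity_pb: float, throughput_gbps: float) -> float:
    """Hours to read the full capacity once at line rate (ASSUMES decimal units)."""
    capacity_gb = capacity_pb * 1_000_000
    return capacity_gb / throughput_gbps / 3600


def previous_gen_gbps(current_gbps: float, pct: float, additive: bool) -> float:
    """Baseline implied by 'pct% improvement'.

    additive=True  reads it as 'pct% more than before' (factor 1 + pct/100).
    additive=False reads it as 'pct% of before'        (factor pct/100).
    Both readings are assumptions; the article does not say which applies.
    """
    factor = 1 + pct / 100 if additive else pct / 100
    return current_gbps / factor


power_kw = implied_power_kw(READ_GBPS, GBPS_PER_KW)
scan_hours = full_read_hours(CAPACITY_PB, READ_GBPS)
baseline_low = previous_gen_gbps(READ_GBPS, IMPROVEMENT_PCT, additive=True)
baseline_high = previous_gen_gbps(READ_GBPS, IMPROVEMENT_PCT, additive=False)

print(f"Implied power at full read rate: {power_kw:.2f} kW")
print(f"One full pass over {CAPACITY_PB}PB: {scan_hours:.1f} hours")
print(f"Implied previous-gen reads: {baseline_low:.1f} to {baseline_high:.1f} GBps")
```

Under those assumptions, the efficiency figure implies a draw of roughly 1.7kW at full read rate, and a single pass over a full 1.5PB system would take a little under four hours. Treat both as rough orientation, not as vendor specifications.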

The systems are designed to pair with parallel file systems like Lustre and BeeGFS, which is where the AI angle sharpens. GPU clusters are only as fast as the data you can feed them. If your storage can’t keep up, those expensive NVIDIA chips sit idle, burning watts and money. The EF-Series is engineered to be the high-bandwidth scratch space that keeps GPUs saturated.

“The refreshed NetApp EF-Series delivers the throughput and capacity businesses need to scale high-powered workloads that transform data into insights and outcomes.”

Clayton Vipond, Senior Solution Architect, CDW

NetApp is targeting a specific set of buyers here: neocloud providers, sovereign AI cloud operators, movie studios with massive media libraries, and anyone running HPC at scale. The pitch is performance without complexity.

With over 1 million EF-Series installations already deployed, NetApp can credibly claim battle-tested reliability. The question isn’t whether the hardware works. It’s whether NetApp can make the sales motion fast enough in a market where every hyperscaler and startup is racing to sell AI-optimised storage.

This infographic was created by Gemini Nano Banana 2, based on this article, which continues below.

The Software: AIDE and the NVIDIA Alliance

The EF-Series is the muscle. The AI Data Engine (AIDE) is the brain. And this is where NetApp’s strategy gets genuinely interesting.

AIDE is a co-engineered effort with NVIDIA, integrated into NVIDIA’s AI Data Platform reference design.

It’s designed to solve what is arguably the biggest unsolved problem in enterprise AI: finding, understanding, and governing data scattered across global estates. Most enterprises don’t have a data problem. They have a finding-the-right-data problem.

Here’s how AIDE works. It automatically creates a global metadata catalog that goes beyond standard file system metadata. It actively analyses file content, semantically enriches the metadata in place (without moving the data), and keeps it continuously updated.

That means enterprises can curate training datasets, govern sensitive information, and feed the freshest data into AI pipelines without the usual mess of ETL jobs and data copies.
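
The idea of enriching metadata in place can be illustrated with a toy Python sketch. To be clear, this is not AIDE's API or implementation, none of which is described in the announcement. Every name here (`CatalogEntry`, `refresh_catalog`, `TAG_RULES`) is hypothetical, and simple keyword matching stands in for real semantic analysis. What it does show is the general pattern: files are read where they sit, only the catalog is written, and a refresh re-analyses only files that have changed.

```python
from dataclasses import dataclass, field
from pathlib import Path
import tempfile

# HYPOTHETICAL keyword rules standing in for real semantic analysis.
TAG_RULES = {"invoice": "finance", "patient": "sensitive", "contract": "legal"}


@dataclass
class CatalogEntry:
    path: Path
    size: int
    mtime_ns: int
    tags: set = field(default_factory=set)


def derive_tags(text: str) -> set:
    """Return the tags whose keyword appears in the text (case-insensitive)."""
    lowered = text.lower()
    return {tag for keyword, tag in TAG_RULES.items() if keyword in lowered}


def refresh_catalog(root: Path, catalog: dict) -> int:
    """Bring the catalog up to date; return how many files were (re)analysed.

    Files are only read, never copied or moved. Unchanged files (same size
    and modification time) are skipped, and entries for deleted files are
    dropped.
    """
    analysed = 0
    seen = set()
    for path in Path(root).rglob("*.txt"):
        stat = path.stat()
        seen.add(path)
        entry = catalog.get(path)
        if entry and entry.size == stat.st_size and entry.mtime_ns == stat.st_mtime_ns:
            continue  # unchanged since the last refresh
        tags = derive_tags(path.read_text(encoding="utf-8", errors="ignore"))
        catalog[path] = CatalogEntry(path, stat.st_size, stat.st_mtime_ns, tags)
        analysed += 1
    for stale in set(catalog) - seen:
        del catalog[stale]
    return analysed


def find_by_tag(catalog: dict, tag: str) -> list:
    """File names whose catalog entry carries the given tag, sorted."""
    return sorted(e.path.name for e in catalog.values() if tag in e.tags)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "q1.txt").write_text("Invoice batch for Q1", encoding="utf-8")
        (root / "notes.txt").write_text("Patient intake notes", encoding="utf-8")
        catalog = {}
        print("analysed:", refresh_catalog(root, catalog))
        print("sensitive files:", find_by_tag(catalog, "sensitive"))
```

A governance query such as "which files are sensitive?" then runs against the catalog alone, without touching or duplicating the underlying data. A production system would need far more (content-aware models, access controls, scale-out indexing), so treat this only as a mental model.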

“Despite massive investments and market pressures to leverage AI for improved productivity and enhanced business decision making, data challenges are bottlenecking projects before they even reach production.”

Syam Nair, Chief Product Officer, NetApp

The NVIDIA partnership runs deep. NetApp will support NVIDIA STX, a modular rack-scale storage reference architecture for agentic AI, built with NVIDIA Vera Rubin and BlueField-4 DPUs.

AIDE will also run on the new NVIDIA RTX PRO 4500 and RTX PRO 6000 Blackwell Server Edition GPUs announced at GTC this week.

The roadmap through the summer of 2026 includes hybrid cloud support, multimodal data capabilities (think: images and video, not just text), and agentic AI workflows with governed access controls.

ISV integrations are coming too: Microsoft Azure AI applications, Google Cloud Vertex AI, and LangChain are all on the near-term list. NetApp is clearly positioning AIDE as the connective tissue between enterprise data and whatever AI framework a customer wants to run.

“FlexPod AI brings together the full stack of compute, storage, networking, security and observability capabilities our customers need to anchor their AI factories. Using NetApp AIDE with this solution moves AI to our customers’ data, right where it lives.”

Jeremy Foster, SVP and GM, Cisco Compute

The Regional Play: Australia, New Zealand, and the NFL

The third announcement centres on NetApp’s INSIGHT Xtra event in Sydney, where the company laid out its vision for the ANZ market. The core message: enterprises in the region are stuck between AI ambition and data fragmentation. Legacy environments, multiple clouds, edge deployments. Data spread everywhere, governed nowhere.

NetApp’s answer is its full product stack: AFX for disaggregated storage that scales capacity and performance independently, AIDE for AI data readiness, the EF-Series for raw throughput, and Ransomware Resilience for ONTAP-based cyber protection. It’s a comprehensive pitch, and in the ANZ context (where sovereign data and cyber resilience are especially hot topics), it lands well.

The unexpected star of the show? The NFL. NetApp is the league’s official Intelligent Data Infrastructure Partner, and the timing is potent: the NFL recently announced nine international games for 2026, including the first-ever regular-season game in Melbourne. The league’s data challenge (real-time fan engagement across time zones, personalised experiences at global scale) turns out to be a surprisingly effective analogy for what every enterprise faces.

“With NetApp-powered Intelligent Data Infrastructure, we are able to consistently deliver incredible and tailored fan experiences around the world whether you’re tuning in for the Super Bowl in the US, attending a watch party in London, or attending a game at the MCG in Melbourne.”

Charlotte Offord, General Manager, NFL Australia & New Zealand

It’s a smart marketing move. Enterprise storage is notoriously difficult to make exciting. Bolting the NFL’s global expansion story onto your data platform pitch gives it narrative energy that “250% throughput improvement” alone can’t deliver.

Reading Between the Lines

Three announcements, one day, one city. The coordination tells you something about how NetApp sees itself right now.

First, the NVIDIA relationship is clearly central to NetApp’s AI strategy. Co-engineering AIDE, integrating with the AI Data Platform reference design, supporting STX, running on Blackwell GPUs. That’s not a casual partnership.

NetApp has essentially welded itself to the NVIDIA ecosystem, which is a calculated bet that NVIDIA’s dominance in AI compute will continue. Given NVIDIA’s current position, it’s a reasonable bet. But it does create dependency.

Second, the disaggregation theme is worth watching. NetApp’s data platform separates storage, services, and control so they can scale independently. That’s an architectural bet against the monolithic storage appliance, and it aligns with how cloud-native enterprises already think about infrastructure.

The AFX systems embody this philosophy. If disaggregated storage catches on broadly (and the trend lines suggest it will), NetApp’s early commitment could pay significant dividends.

Third, AIDE’s in-place semantic enrichment is a genuinely clever approach. The traditional method of preparing data for AI involves copying it to new locations, running transformation pipelines, and managing yet another set of data stores.

AIDE’s promise of enriching metadata where the data already lives eliminates copies, reduces security exposure, and keeps data current. If it works as described at production scale, it addresses one of the most painful friction points in enterprise AI adoption.

The risk? Execution. AIDE is launching to “lighthouse” customers this month with broad availability promised for early summer 2026. The feature roadmap (hybrid cloud, multimodal, agentic AI support) is ambitious.

NetApp will need to ship on time and at quality to maintain credibility with enterprise buyers who’ve heard plenty of AI promises from storage vendors over the past two years.

The Bottom Line

NetApp is making a coherent, multi-layered argument: you need extreme performance storage (EF-Series), you need intelligent data management that makes your data AI-ready without moving it (AIDE), and you need it all running on a platform that scales without lock-in (the NetApp data platform with AFX).

Layer the NVIDIA partnership on top and you get an enterprise AI infrastructure story that’s hard to dismiss.

The company has been at this for three decades now, which is both a strength (proven reliability, 1 million+ installations) and a vulnerability (the market moves fast, and incumbents can be slow).

What’s encouraging is that NetApp isn’t just bolting AI buzzwords onto legacy products. The architectural decisions (disaggregation, in-place enrichment, open ISV integrations) suggest genuine strategic thinking about where enterprise data infrastructure needs to go.

Whether the market rewards that thinking depends on execution. But the blueprint? It’s the most coherent AI data infrastructure story I’ve seen from an enterprise storage vendor this year – and the NFL tie-in doesn’t hurt!
